Thinking in pipelines

Most AI writing tools give you a single prompt box. You type something, the model responds, and that is the entire interaction. This works for one-off tasks but falls apart when you need a multi-step process in which each stage builds on the previous one.

A pipeline is different. It is a sequence of operations where data flows from one step to the next, each step transforming or enriching the content before passing it along. Developers have used pipelines for decades in data processing. Ritemark Flows brings the same idea to writing, without requiring you to write code.
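For readers who do think in code, the idea can be sketched in a few lines of Python. This is only an illustration of the general pipeline concept, not of how Ritemark is implemented; `run_pipeline` and the three toy steps are made-up names.

```python
from functools import reduce
from typing import Callable

# A step takes text in and hands transformed text on.
Step = Callable[[str], str]


def run_pipeline(text: str, steps: list[Step]) -> str:
    """Feed text through each step in order; each output is the next input."""
    return reduce(lambda current, step: step(current), steps, text)


# Three toy steps standing in for real processing stages (hypothetical).
steps: list[Step] = [
    str.strip,                        # remove surrounding whitespace
    lambda s: s.replace("  ", " "),   # collapse double spaces
    str.capitalize,                   # normalize capitalization
]

result = run_pipeline("  hello  pipeline world ", steps)
print(result)  # Hello pipeline world
```

Each step knows nothing about its neighbors. It only agrees on the shape of the data passing through, which is what makes steps easy to reorder or swap.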

Anatomy of a flow

Every flow in Ritemark consists of three elements: a trigger, a set of processing nodes, and one or more outputs.

The trigger determines when the flow runs. You can trigger it manually with a button click, or configure it to run when a file changes in your project folder. Manual triggers are good for on-demand tasks. File-change triggers are useful for workflows that should run automatically whenever source material updates.
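One way a file-change trigger could work is to compare a fingerprint of the file between checks. The sketch below assumes a simple polling approach with a content hash. How Ritemark actually detects changes is not described here, and `FileChangeTrigger` is a hypothetical name.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(path: Path) -> str:
    """Hash the file contents so that any edit yields a different value."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


class FileChangeTrigger:
    """Hypothetical trigger: reports True when the file changed since the last poll."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self._last = fingerprint(path)

    def poll(self) -> bool:
        current = fingerprint(self.path)
        changed = current != self._last
        self._last = current
        return changed


with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "notes.md"
    source.write_text("first version", encoding="utf-8")
    trigger = FileChangeTrigger(source)
    unchanged = trigger.poll()   # nothing edited yet
    source.write_text("second version", encoding="utf-8")
    changed = trigger.poll()     # content differs, so a flow would run here
    settled = trigger.poll()     # no further edits since the last poll

print(unchanged, changed, settled)  # False True False
```

A manual trigger needs no such bookkeeping: it simply runs the flow when asked.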

Processing nodes are where the work happens. Each node performs a single operation. An LLM Prompt node sends content to an AI model with your instructions and returns the response. A Claude Code node runs a code-level task, like reformatting a document structure or extracting metadata from frontmatter. An Image Generation node creates visuals from text descriptions. A File Operation node reads, writes, renames, or moves files in your project.

Outputs determine where results go. Most flows write to a file, but you can also send output to the clipboard, display it in a preview panel, or chain it into another flow.
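As a mental model, the three elements can be written down as a small data structure. The field names and string values below are assumptions made for illustration. They are not Ritemark's actual file schema.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One processing step (hypothetical shape)."""
    id: str
    kind: str                       # e.g. "llm_prompt", "file_operation"
    config: dict = field(default_factory=dict)


@dataclass
class Flow:
    """A trigger, a set of processing nodes, and one or more outputs."""
    trigger: str                    # "manual" or "file_change"
    nodes: list[Node]
    outputs: list[str]              # e.g. "file", "clipboard", "preview"

    def validate(self) -> None:
        if self.trigger not in {"manual", "file_change"}:
            raise ValueError(f"unknown trigger: {self.trigger}")
        if not self.nodes:
            raise ValueError("a flow needs at least one processing node")
        if not self.outputs:
            raise ValueError("a flow needs at least one output")


flow = Flow(
    trigger="manual",
    nodes=[Node(id="summarize", kind="llm_prompt", config={"model": "claude"})],
    outputs=["file"],
)
flow.validate()  # passes silently: all three elements are present
```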

Connecting nodes

The visual editor uses a canvas where each node appears as a card. Every card has input ports on the left and output ports on the right. You connect them by dragging a line from an output port to an input port.

When a flow runs, data moves through these connections in order. The first node produces output. That output becomes the input for the next node. If a node has multiple inputs, it waits until all of them have data before executing.

You can branch flows too. A single node's output can feed into two different paths. This is useful when you want the same source content to produce two different outputs, say a summary for social media and a full article for your blog.
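The execution rule and branching described above can be sketched as a small graph runner. This is a generic topological-order scheduler written for illustration. It is not Ritemark's engine, and the node names are invented.

```python
from collections import deque
from typing import Callable


def run_graph(
    nodes: dict[str, Callable[..., str]],
    edges: list[tuple[str, str]],
) -> dict[str, str]:
    """Run each node once all of its upstream nodes have produced output."""
    upstream: dict[str, list[str]] = {name: [] for name in nodes}
    downstream: dict[str, list[str]] = {name: [] for name in nodes}
    for src, dst in edges:
        upstream[dst].append(src)
        downstream[src].append(dst)

    # Count how many inputs each node is still waiting for.
    waiting = {name: len(srcs) for name, srcs in upstream.items()}
    ready = deque(name for name, count in waiting.items() if count == 0)
    results: dict[str, str] = {}

    while ready:
        name = ready.popleft()
        inputs = [results[src] for src in upstream[name]]
        results[name] = nodes[name](*inputs)
        for dst in downstream[name]:
            waiting[dst] -= 1
            if waiting[dst] == 0:   # every input has data now
                ready.append(dst)

    if len(results) != len(nodes):
        raise ValueError("flow contains a cycle; some nodes never became ready")
    return results


# One source feeding two branches, which are then joined again.
nodes = {
    "read": lambda: "rough draft",
    "summary": lambda text: f"summary of {text}",
    "article": lambda text: f"article from {text}",
    "bundle": lambda a, b: f"{a} + {b}",
}
edges = [
    ("read", "summary"),
    ("read", "article"),
    ("summary", "bundle"),
    ("article", "bundle"),
]
results = run_graph(nodes, edges)
print(results["bundle"])  # summary of rough draft + article from rough draft
```

The `bundle` node has two inputs, so it only runs after both branches finish.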

Node types in detail

The LLM Prompt node is the most common. You write a system prompt and a user prompt, with placeholders for incoming data. When the node executes, it fills the placeholders with the actual content from the previous node and sends the complete prompt to your chosen model. You can pick Claude, GPT, Gemini, or any model accessible through an API key you have configured in Ritemark.

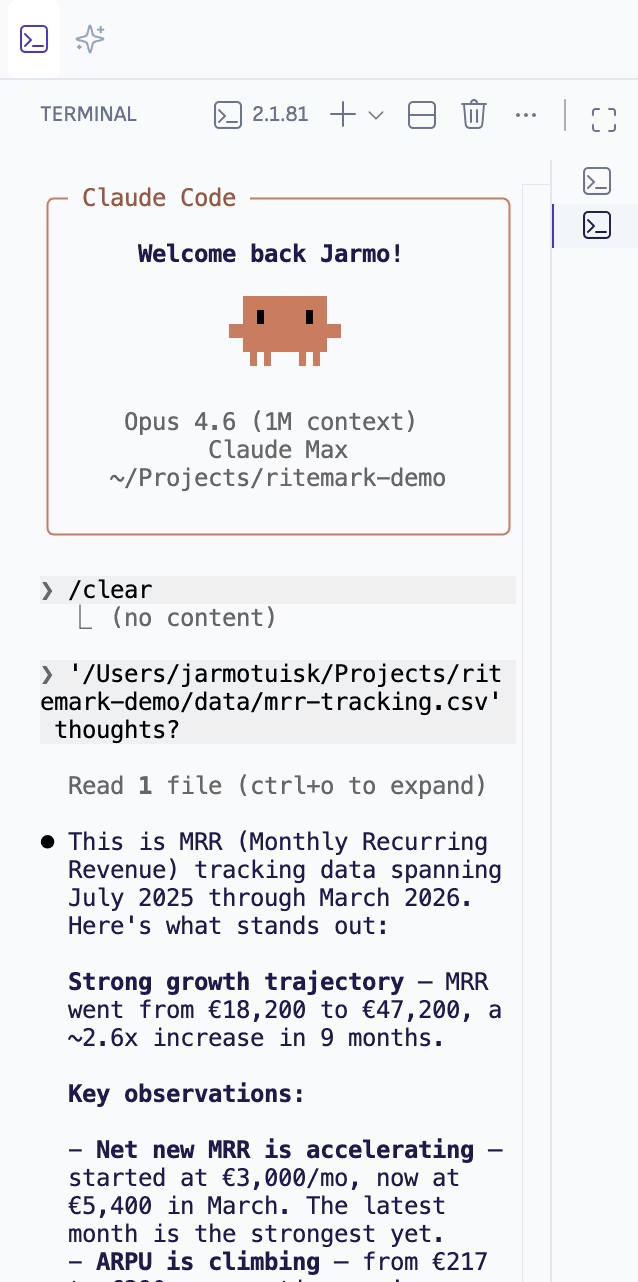
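Placeholder filling is ordinary template substitution. The sketch below uses Python's `string.Template` with `$name` placeholders. That syntax is an assumption for illustration, since Ritemark's own placeholder format is not specified here, and `build_prompt` is a hypothetical helper.

```python
from string import Template


def build_prompt(
    system_template: str,
    user_template: str,
    incoming: dict[str, str],
) -> dict[str, str]:
    """Fill both templates with data from the previous node.

    Template.substitute raises KeyError if a placeholder has no value,
    which surfaces a missing connection instead of sending a broken prompt.
    """
    return {
        "system": Template(system_template).substitute(incoming),
        "user": Template(user_template).substitute(incoming),
    }


prompt = build_prompt(
    system_template="You are an editor for $publication.",
    user_template="Summarize the following draft in two sentences:\n\n$content",
    incoming={
        "publication": "the company blog",
        "content": "Pipelines chain steps together.",
    },
)
print(prompt["system"])  # You are an editor for the company blog.
```

The filled-in `system` and `user` strings are what would then be sent to whichever model the node is configured to use.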

The Claude Code node is more specialized. It runs agentic coding tasks, things like "parse this Markdown file and extract all H2 headings into a JSON list" or "rewrite this file to match the formatting conventions in STYLE.md." It operates on your actual project files, so it can do structural work that a simple prompt cannot.
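To make the first example task concrete, here is roughly the kind of result it asks for, written by hand in Python. The node itself is driven by a natural-language instruction rather than by code like this. The function name and the simplified heading rule (lines starting with `## `, ignoring fenced code) are assumptions.

```python
import json
import re

# Exactly two '#' followed by whitespace, then the heading text.
H2_PATTERN = re.compile(r"^##[ \t]+(.+?)[ \t]*$")
FENCE = "`" * 3  # Markdown code-fence marker


def extract_h2_headings(markdown: str) -> str:
    """Return all H2 headings as a JSON list, skipping fenced code blocks."""
    headings: list[str] = []
    in_fence = False
    for line in markdown.splitlines():
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence
            continue
        if in_fence:
            continue
        match = H2_PATTERN.match(line)
        if match:
            headings.append(match.group(1))
    return json.dumps(headings)


sample = "\n".join([
    "# Title",
    "## Setup",
    "Some text.",
    FENCE,
    "## not a heading, just code",
    FENCE,
    "### Details",
    "## Usage  ",
])
print(extract_h2_headings(sample))  # ["Setup", "Usage"]
```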

The File Operation node handles the mechanical parts. Read a specific file, write output to a path, copy a file to another location, or list all Markdown files in a directory. These nodes are the glue that connects your AI processing to your actual file system.
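In code terms, these operations map onto a handful of standard-library calls. The helper names below are hypothetical. They show the kind of work a File Operation node does, not Ritemark's own API.

```python
import shutil
import tempfile
from pathlib import Path


def list_markdown_files(directory: Path) -> list[Path]:
    """Find every .md file under the directory, in a stable order."""
    return sorted(directory.rglob("*.md"))


def write_output(path: Path, content: str) -> None:
    """Write text to a path, creating parent folders as needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(content, encoding="utf-8")


def copy_file(src: Path, dst: Path) -> None:
    """Copy a file to another location, creating parent folders as needed."""
    dst.parent.mkdir(parents=True, exist_ok=True)
    shutil.copy2(src, dst)


# Demonstrate in a throwaway folder so no real project files are touched.
with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp)
    write_output(project / "drafts" / "post.md", "# Draft")
    write_output(project / "notes.txt", "not markdown")
    copy_file(project / "drafts" / "post.md", project / "published" / "post.md")
    found = [p.relative_to(project).as_posix() for p in list_markdown_files(project)]

print(found)  # ['drafts/post.md', 'published/post.md']
```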

Building a real pipeline

Here is a concrete example. You want to take a rough draft, improve its structure, check it for tone consistency, and produce a final version.

Node one reads the draft file from your project. Node two sends it to Claude with instructions to reorganize the content into clearer sections with better transitions. Node three passes the restructured version to a second prompt that checks tone, flagging any sections that feel too formal or too casual. Node four writes the result to a new file.

Four nodes, three connections, one click to run. The entire pipeline takes about thirty seconds to execute, depending on document length and model response time.
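Written out as code, the same four steps look roughly like this. The sketch is illustrative only: `call_model` stands in for whatever provider you have configured, the prompt wording is invented, and the demo uses a fake model so that it runs without an API key.

```python
import tempfile
from pathlib import Path
from typing import Callable

# Any function that takes a prompt and returns the model's reply.
ModelCall = Callable[[str], str]


def run_draft_pipeline(draft_path: Path, out_path: Path, call_model: ModelCall) -> str:
    # Node one: read the draft file.
    draft = draft_path.read_text(encoding="utf-8")
    # Node two: restructure into clearer sections.
    restructured = call_model(
        "Reorganize this draft into clearer sections with better transitions:\n\n" + draft
    )
    # Node three: check tone on the restructured version.
    reviewed = call_model(
        "Flag any sections that feel too formal or too casual:\n\n" + restructured
    )
    # Node four: write the result to a new file.
    out_path.write_text(reviewed, encoding="utf-8")
    return reviewed


calls: list[str] = []


def fake_model(prompt: str) -> str:
    """Stand-in model: records the prompt and echoes the content back."""
    calls.append(prompt)
    _instructions, _, body = prompt.partition("\n\n")
    return body.strip()


with tempfile.TemporaryDirectory() as tmp:
    draft_file = Path(tmp) / "draft.md"
    final_file = Path(tmp) / "final.md"
    draft_file.write_text("A rough draft.\n", encoding="utf-8")
    final = run_draft_pipeline(draft_file, final_file, fake_model)
    saved = final_file.read_text(encoding="utf-8")

print(len(calls), saved)  # 2 A rough draft.
```

Swapping `fake_model` for a real API call is the only change needed to run this against an actual provider. In Ritemark, that wiring is what the node connections do for you.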

Local and private

Every flow runs on your machine. The visual editor, the execution engine, and the file operations all happen locally. LLM nodes make API calls to whichever provider you have configured, but Ritemark itself never sees your content or your prompts. There is no Ritemark account, no cloud sync, no telemetry on what you build.

Your flows are saved as JSON files in your project, so they live alongside your content in version control. Share them with teammates by committing them to the repo.

Download Ritemark and build your first AI pipeline in minutes.